💻 What clunky computers from old sci-fi can teach us about economic decision-making today

They provide a fascinating image of an alt-history created by different societal choices about technology

Quote of the Issue

“The American Dream is that dream of a land in which life should be better and richer and fuller for everyone, with opportunity for each according to ability or achievement. [Not] a dream of motor cars and high wages merely, but a dream of social order in which each man and each woman shall be able to attain to the fullest stature of which they are innately capable, and be recognized by others for what they are, regardless of the fortuitous circumstances of birth or position.” - James Truslow Adams

The Essay

💻 What clunky computers from old sci-fi can teach us about economic decision-making

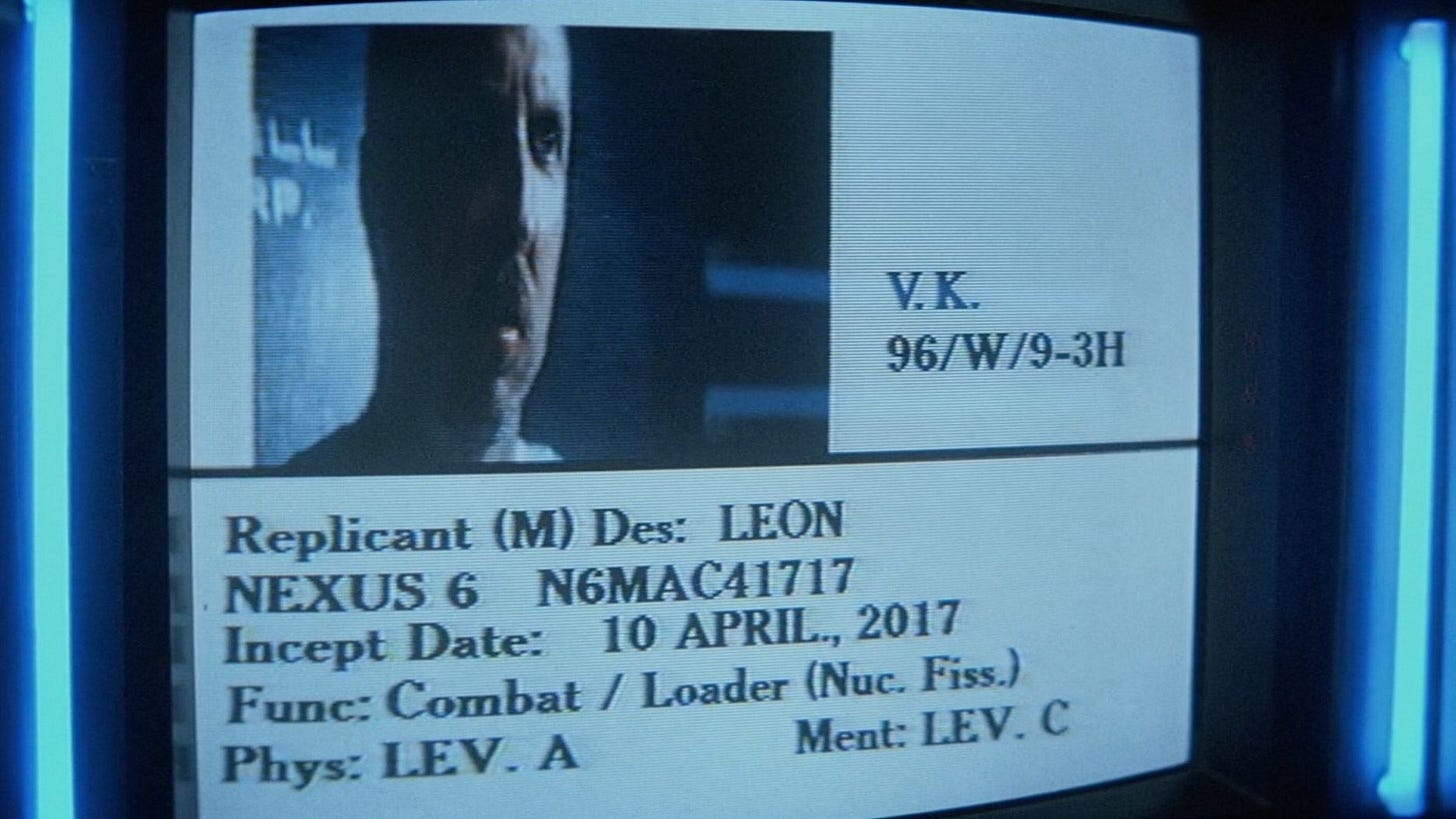

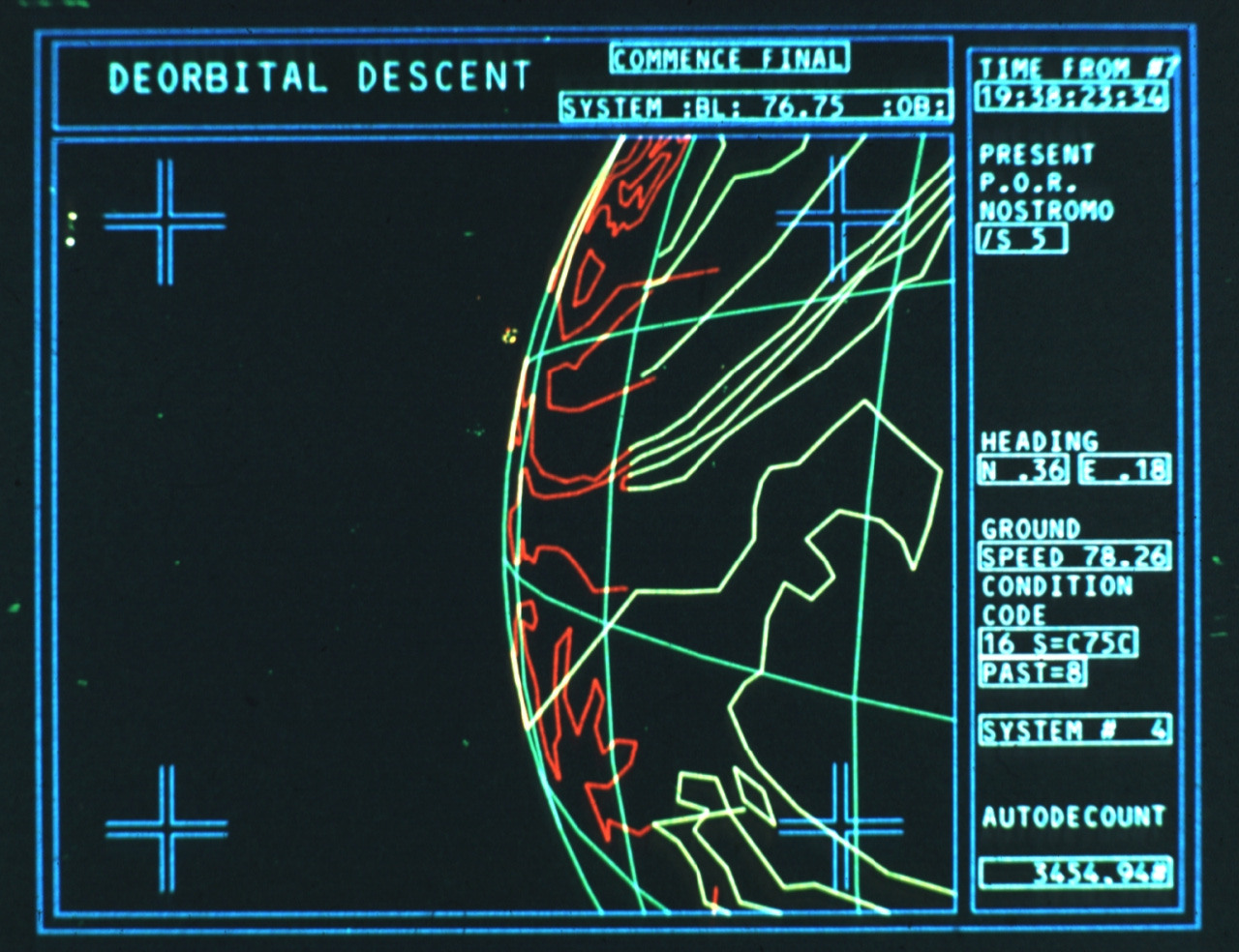

Fans (including me) of the Alien(s) and Blade Runner film franchises like to imagine the films occupy the same cinematic universe. (The original films were directed by Ridley Scott, and he’s confirmed a loose connection between them.) Both are set in seemingly dystopian futures where humanity has colonized other planets. There’s a lot of 2001: A Space Odyssey in their attempt to show a realistic future without laser guns and faster-than-light travel. Alien(s) and Blade Runner also share a similar design and visual aesthetic: dark, gritty, and industrial where interiors are cramped, claustrophobic, and poorly lit. The Nostromo spaceship in Alien suggests a flying oil rig, it’s been said, rather than a sleek cruiser.

Some fans also like to include the lesser-known 1981 film Outland in the mix. It stars Sean Connery as the new marshal assigned to a mining colony on Io, a moon of Jupiter. It’s also grim, gritty, and industrial with a “used” world look to it. And like those other two films, the technology seems realistically extrapolated from the technology of today, or at least the technology of the 1970s and early 1980s.

Indeed, the one bit of tech that always seems to date a sci-fi film, even one from the fairly recent past, is its clunky-looking computers with their boring, simplistic interfaces. These are worlds without high-definition, flat-screen monitors and a variety of colors and fonts to choose from. Where are all the smartphones? The explanation: Predicting the future is hard, not to mention that less sophisticated computer graphics back then made it more difficult to depict the future. These films are inescapably products of their time. And visually, the depictions of computers work to reinforce the overall industrial aesthetic.

But there’s also a plausible in-universe explanation for the lack of progress when it comes to information technology, one that’s relevant to real-world economic history. Consider: In our world, we’ve experienced huge progress in IT over the past half-century or so. As captured by Moore’s Law, the number of transistors per microchip has increased to 50 billion from 100 in the 1960s. “In simple terms,” explains Bloomberg columnist Tim Culpan, “the density rate added a zero every 3.5 years.” GPS, the internet, notebook computers, smartphones, and the recent breakthroughs in AI reflect Moore’s Law.

But tech progress outside the Information and Communications Technology Revolution, in the world of atoms rather than bits, has been less impressive. Americans haven’t left low-Earth orbit since December 1972, the year before the FAA banned overland supersonic commercial flight. Space launch costs were stuck for decades until SpaceX. Flying from Los Angeles to New York or across the Atlantic Ocean is only as fast as it was 40 years ago. We get no greater share of energy from nuclear power than we did 40 years ago. As Northwestern University economist Robert Gordon told me back in 2016:

We’ve had plenty of inventions since 1970 but it’s been focused on the narrow sphere of entertainment, information, and communications technology. That means everything associated with the television, including time shifting through VCRs and DVRs; computing, going from the mainframe through mini-computers and personal computers through to the laptop and the smartphone; and the mobilization of communication, moving from the landline phone to the dumb mobile and now the smart mobile phone. Those innovations are everything that we talk about today, but in perspective they’re just a small slice of what human beings care about. … The progress we’ve achieved has been more narrowly focused in a smaller part of the economy.

I’ve frequently written about why tech progress has been so narrow and slow: too little radical R&D and too much repressive regulation are at the top of my list. For decades, it’s been a lot easier to innovate in the world of ICT than in energy, space, and transportation.

But maybe the America of those films I just mentioned made a different sociopolitical choice and the energies of entrepreneurs, technologists, and scientists were directed toward a broader range of technologies. Maybe IT progress wasn’t as fast in those worlds, but perhaps it was faster in other areas. One example of a sci-fi creator making technological choice an explicit part of the story is the early 2000s reimagining of Battlestar Galactica by Ronald D. Moore, where humans have rejected AI to avoid another robot uprising. Likewise, Star Trek’s Federation bans genetic engineering due to an earlier global war with enhanced superpeople.

But even in the more technologically diverse reality of Alien(s) and Blade Runner, the lack of IT progress may be more stylistic than substantial given that both have highly sophisticated humanoid androids that certainly seem to possess human-level intelligence. I would like to think what’s true in those films could also have been true for us: advancing significantly in ICT wouldn’t have prevented us from advancing significantly in other areas as well. Indeed, isn’t that what we are seeing right now with big progress across a range of technologies? Even better, we are seeing combinatorial impacts where advances in IT are helping boost advances in biotech, energy, and space.

The real good news is that the dystopian futures shown in Alien(s), Blade Runner, and Outland are unlikely. A civilization with the technological know-how to travel across the Solar System and build human-level AI is likely to be one of mass abundance and widespread prosperity. Too bad Hollywood so rarely makes compelling films that assume the more likely economic outcome.

Micro Reads

▶ What if There Was Never a Pandemic Again? - Jassi Pannu and Jacob Swett, NYT Opinion | The next-generation pandemic prevention technologies are on our doorstep, and building these tools into our environment could make Covid-19 the world’s last pandemic. But to realize this future, we need to commit to viewing pandemic prevention as just as much of a political priority as pandemic response has been.

▶ JPMorgan seems to be working on a ChatGPT-style tool that will enable AI-powered investing - George Glover, Business Insider |

▶ Multiplying Solar and Battery Factories Put Net Zero in Closer Reach - Nathaniel Bullard, Bloomberg |

▶ Why tech giants want to strangle AI with red tape - Schumpeter, The Economist | If big tech uses regulation to fortify its position at the commanding heights of generative AI, there is a trade-off. The giants are more likely to deploy the technology to make their existing products better than to replace them altogether. They will seek to protect their core businesses (enterprise software in Microsoft’s case and search in Google’s). Instead of ushering in an era of Schumpeterian creative destruction, it will serve as a reminder that large incumbents currently control the innovation process, what some call “creative accumulation”. The technology may end up being less revolutionary than it could be.

▶ New superbug-killing antibiotic discovered using AI - James Gallagher, BBC |

▶ Waluigi, Carl Jung, and the Case for Moral AI - Nabeel S. Qureshi, Wired |

▶ What performance-enhancing stimulants mean for economic growth - The Economist | Towards the end of last year America began running short of medicines used to treat attention-deficit hyperactivity disorder (ADHD), including Adderall (an amphetamine) and Ritalin (a central-nervous-system stimulant). Nine in ten pharmacies reported shortages of the medication, which tens of millions of Americans use to help improve focus and concentration. Around the same time, something intriguing happened: American productivity, a measure of efficiency at work, dropped. In the first quarter of 2023, output per hour fell by 3%. … Coincidence? It often seems like half of Silicon Valley, the most innovative place on Earth, is on the stuff. And surprising things can cause GDP to rise and fall, including holidays, strikes and the weather. What’s more, the economic history is clear: without things that give people a buzz, the world would still be in the economic dark ages.

▶ Scientists find way to make energy from air using nearly any material - Dan Rosenzweig-Ziff, WaPo |

▶ How Nvidia created the chip powering the generative AI boom - Tim Bradshaw and Richard Waters, FT | That technological leap is set to be accelerated by the H100, which is based on a new Nvidia chip architecture dubbed “Hopper” (named after the American programming pioneer Grace Hopper) and has suddenly become the hottest commodity in Silicon Valley.

▶ The AI Boom Runs on Chips, but It Can’t Get Enough - Deepa Seetharaman, WSJ |

▶ The staggering cost of a green hydrogen economy - Camilla Paladino, FT Opinion | Some 1,000 new projects globally have been announced to date, requiring total investment of $320bn, according to the Hydrogen Council, an industry body whose members include oil companies such as BP and carmakers like BMW Group. Would-be developers, however, have only committed $29bn so far.