🚀 Faster, Please! Week in Review #40

ChatGPT, 'cornucopianism,' WWII and productivity growth, and much more

My free and paid Faster, Please! subscribers: Welcome to Week in Review+. No paywall! Thank you all for your support! For my free subscribers, please become a paying subscriber today. (Expense a corporate subscription perhaps?)

Melior Mundus

In This Issue

Essay Highlights:

— ChatGPT and technological surprise: China edition

— In defense of 'cornucopianism' and a more populous planet

— Would America's 20th Century postwar boom have been stronger without, you know, the war?

Best of 5QQ

— 5 Quick Questions for … economist Parker Rogers on FDA regulation

— 5 Quick Questions for … Tony Mills on metascience

Best of the Pod

— A conversation with economist Avi Goldfarb on the disruptive economic potential of AI

Essay Highlights

🤖 ChatGPT and technological surprise: China edition

The seemingly sudden emergence and societal splash of generative AI have not gone unnoticed by China. According to an excellent piece in The New York Times by reporter Li Yuan, ChatGPT, the chatbot from Microsoft-backed OpenAI, has left Chinese tech entrepreneurs “shocked and demoralized. It has dawned on many of them that despite the hype, China lags far behind in artificial intelligence and tech innovation.” While many journalists and experts have framed the issue as the challenge from Chinese-style industrial policy, less commented upon is the challenge of industrial policy itself. Government direction of private economies has a spotty track record at best. Censorship has been Communist Beijing’s most potent tool for curbing the spread of unwelcome ideas and dissent, but that fixation is now stifling AI progress. Data, the lifeblood of AI, is increasingly hard to come by in China's censored online environment, making it difficult to develop technologies like ChatGPT. Maybe “smart” central planning and lots of government investment aren’t enough to broadly push forward the tech frontier, and maybe they even slow progress in some areas. Something to consider as America embraces its own version of industrial policy in response to China.

⤴ In defense of 'cornucopianism' and a more populous planet

Population worriers never see potential, only problems. They always think the wrong sorts of people are having too many babies. Let me offer another view: Economic growth (a greater ability to turn our dreams into reality) is driven by people discovering new ideas. My guess is that the more smart humans we have, assisted by smarter and smarter tech and operating in a basic environment of economic freedom, the faster tech progress and economic growth will be, benefiting all of us. Setting aside demographic trends that show global population plateauing by century’s end, past plans for slowing population growth provide ample reason for concern. But in a recent Scientific American essay, “Eight Billion People in the World Is a Crisis, Not an Achievement,” Naomi Oreskes, a science historian at Harvard University, describes “cornucopianism”: a “label given to individuals who assert that the environmental problems faced by society either do not exist or can be solved by technology or the free market.” This strikes me as a clumsy attempt to discredit opponents as a bunch of wild-eyed, extreme libertarians. Most of the pro-progress folks I know certainly see a role for government, from a safety net to funding basic science, if not more.

💥 Would America's 20th Century postwar boom have been stronger without, you know, the war?

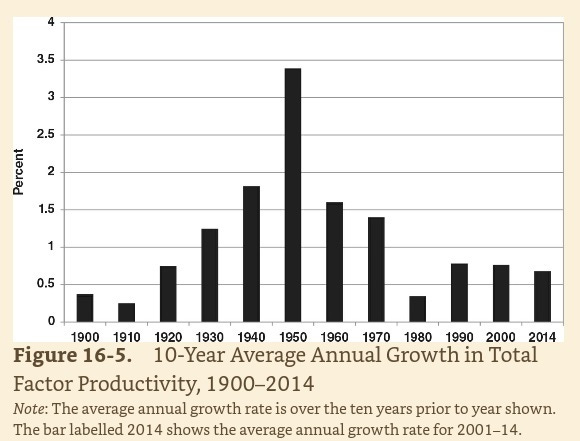

The above image comes from The Rise and Fall of American Growth by Northwestern University economist Robert Gordon. It shows a surge in total factor productivity growth during the middle decades of the century, the 1920s through the 1960s, raising the question of how World War II affected the postwar economy. In his book, Gordon makes a case for the importance of wartime advances for increasing the longer-term productive capacity of the American economy. But economist Alexander J. Field argues that the foundations for the golden age were already in place in 1941. In his excellent 2012 book A Great Leap Forward: 1930s Depression and U.S. Economic Growth, Field turns the standard account of the depressionary 1930s on its head, suggesting that the years between 1929 and 1941 were a time of remarkable technological progress rather than stagnation. In a recent paper, Field concludes that during the war, the shift to unfamiliar products and processes led to decreased total factor productivity in manufacturing. I think a more robust postwar economy without the war itself (assuming, of course, no need to defeat the Axis, a massive assumption) isn’t a crazy counterfactual. Not at all. And I think the argument is a good reminder about the disruptive impact of war.

Best of 5QQ

💡 5 Quick Questions for … economist Parker Rogers on FDA regulation

Parker Rogers is a PhD candidate in economics at the University of California, San Diego and author of “Regulating the Innovators: Approval Costs and Innovation in Medical Technologies.”

To what extent do the costs of regulations deter producers from medical product R&D?

My research on medical devices shows that regulatory barriers can deter medical product R&D by as much as 400 percent, depending on the types of regulations. It’s concerning that, despite our ability to have intelligent conversations with computers, we are still grappling with routine illnesses and diagnostic errors. I wouldn’t be surprised if the current regulatory framework plays a crucial role in this discrepancy.

💡 5 Quick Questions for … Tony Mills on metascience

It seems like there’s been more political interest in R&D funding since the pandemic. Is that cause for optimism?

I think a certain amount of realism is also needed when it comes to politics. Over the past few years, we had a lot of interest in increasing public investments in science and R&D generally. This actually predated the pandemic, but I think the pandemic kind of accelerated that in a lot of ways. And so that culminated, for example, in the CHIPS Act last year. At one point in time in the mid-20th century, the public sector was the largest funder of R&D, about two-thirds, and the private sector was about a third. And that's now flipped. There's a lot of desire to change that ratio. Were these legislative efforts going to move us in that direction? I think the reality is we have an R&D system that's very different than it was in the mid-20th century. And if you look at what in fact happened, the authorizations for funding, they were significant. They weren't as significant as it seemed they might be in the beginning, but they were never going to fundamentally restructure that R&D system. It's just way too much money for that to be possible. But then if you look at actually how much money was appropriated, it was even less. And so there was lots of highfalutin rhetoric about the return of public investment in science. There's a question about the political will to do some of these things. I think that one of the real virtues of metascience is that it helps us move past this preoccupation with funding as the controlling variable in all of this. And that's, I think, reason for optimism.

Best of the Pod

🤖 A conversation with economist Avi Goldfarb on the disruptive economic potential of AI

I think of the classic Paul David paper about the dynamo. It took a while before factories used electricity, and they actually had to redo how the factory was designed to get full productivity value. And you say that we are sort of in the “between times.” And that makes me think of the classic Solow paradox: We see computers everywhere but in the statistics. He said that in ’87. Are we, like, in the 1987 period with this technology? Or are we now in the late ’90s, where it's starting to happen and the boom is about to begin?

I think we're in the early ’80s.

Not even the late ’80s?

He said that in 1987. By 1990 it was showing up in the data. So he just missed it.

[We’re in the early 1980s] in the sense that we don't quite know what the organization of the future looks like. There are reasons to think for many industries it might take a long time, like many years or decades, for it to show up in the productivity stats. While I do say we're in the early ’80s because we haven't figured it out yet, I'm a little more optimistic that maybe it won't be 30 years to really have the impact. Mostly because we just have the lessons of history. We know from past technologies, and business leaders know from past technologies, electricity and the internet and the steam engine and others, that it requires some system-level change. And we now have the toolkits to think through, how do you build system-level change without destroying your company?

When electricity was diffusing in the 1890s, there wasn't really any idea that this might take 40 years to figure out what the factory of the future looks like. It just wasn't on anybody's mind. The management challenges of redesign were unstudied, and there was no easily accessible knowledge to figure that out. Jump forward to the ’80s and computing: Again, we hadn't even learned the lessons of electricity back then. Paul David's paper came out in 1990. It was a solution to the Solow paradox.

But since then, we have a much better understanding of what's required for technological change. There have been decades of economics literature from Erik Brynjolfsson, Tim Bresnahan, Paul David, and others. And there's been decades of management literature taking a lot of those ideas from econ and trying to communicate them to a broader audience to say, “Yes, it's hard. But doing nothing can also be a disaster. So being proactive is useful.” Then there's another piece about optimism here, which is that the entrepreneurial ecosystem is different than it used to be. And we have lots and lots of very smart people building tech companies, trying to make the system-level change happen. And that gives us, effectively, more kicks at the can to actually figure out what the right system looks like.