💊 Bad innovation: the real economic story behind America’s opioid epidemic

Also: H.G. Wells and the long decline of techno-optimism

“After an excessively optimistic phase early in the post-World War II period, the intellectual climate surrounding the concept of modernization through economic and technological advancement has, until recently, tended to be excessively pessimistic.” - Herman Kahn, 1979

In This Issue

Micro Reads: Regulating AI; Washington vs AVs; education and IQ; and more . . .

Short Read: Bad innovation: the real economic story behind America’s opioid epidemic

Long Read: H.G. Wells and the long decline of techno-optimism

Micro Reads

🤖 Dangers of unregulated artificial intelligence - Daron Acemoğlu, VoxEU | In this analysis, the MIT economist (whose scholarship has stressed how AI can both automate and complement human work, as well as create new work) warns that “current AI technologies — especially those based on the currently dominant paradigm relying on statistical pattern recognition and big data — are more likely to generate various adverse social consequences, rather than the promised gains.” His tap-the-brakes solution: using regulation to slow down AI technologies where addressing the downside will “become politically and socially more difficult after large-scale implementation.” One Acemoğlu example: the impact of social media on political discourse and democratic politics.

🚘 Elon Musk and the Coming Federal Showdown Over Driverless Vehicles - Adam Thierer and John Croxton, Discourse | The authors are worried that “a federal crackdown is coming for Tesla and driverless tech,” especially because “many Democratic lawmakers are feeling pressure from unions to slow the pace of vehicle automation, especially in trucking.”

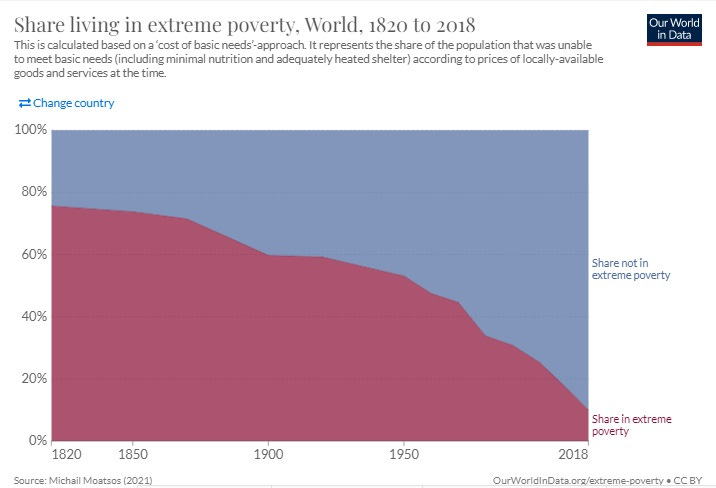

📈 Extreme poverty: how far have we come, how far do we still have to go? - Max Roser, Our World in Data | From the must-read piece: “Two centuries ago the majority of the world population was extremely poor. . . . But even after two centuries of progress, extreme poverty is still the reality for every tenth person in the world. . . . The poorest people today live in countries which have achieved no growth. This stagnation of the world’s poorest economies is one of the largest problems of our time. Unless this changes millions of people will continue to live in extreme poverty.”

👶 Americans say they’re not planning to have a child, new poll says, as U.S. birthrate declines - The Washington Post | The share of women ages 18 to 49 and men ages 18 to 59 who said they were “not too likely” to have kids grew to 21 percent in 2021 vs. 16 percent in 2018. But the stat that really caught my attention was that 56 percent of the “not at all or not too likely” group said it’s because they just don’t want them, down from 63 percent in 2018. “This time around, 43 percent cited other reasons including medical issues, economic or financial reasons, and lack of partner.”

⚡ The race to train a new cohort of electric vehicle mechanics - Andy Palmer, Financial Times | The author, who is CEO of Switch Mobility and previously launched the Nissan Leaf while COO of Nissan, gives a good example of how maximizing value from tech advances requires plenty of investment in human capital: “A modern car contains around 100m lines of code. To put that into context, a top-spec airliner has 14m lines. In the next decade, it is estimated that most passenger vehicles will need around 300m lines of software to keep them on the road.”

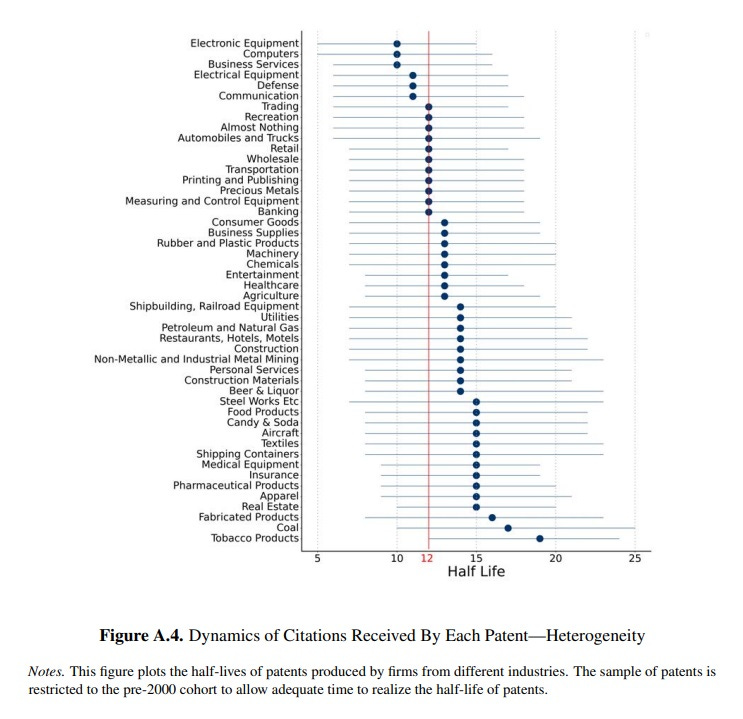

☎ Technological Obsolescence - Song Ma (Yale University), NBER | When it comes to the half-life of innovation, at least measured by patents, obsolescence happens quickly with tech, less so with coal — as you might guess.

🧠 How Much Does Education Improve Intelligence? A Meta-Analysis - Stuart J. Ritchie (University of Edinburgh) and Elliot M. Tucker-Drob (University of Texas at Austin) | While many wonder about the potential of genetic tinkering to boost human intelligence, it seems we already have a pretty good method of doing so. From the 2019 paper (thanks to Ethan Mollick for the pointer):

Across 142 effect sizes from 42 data sets involving over 600,000 participants, we found consistent evidence for beneficial effects of education on cognitive abilities of approximately 1 to 5 IQ points for an additional year of education. Moderator analyses indicated that the effects persisted across the life span and were present on all broad categories of cognitive ability studied. Education appears to be the most consistent, robust, and durable method yet to be identified for raising intelligence.

Short Read

💊 Bad innovation: the real economic story behind America’s opioid epidemic

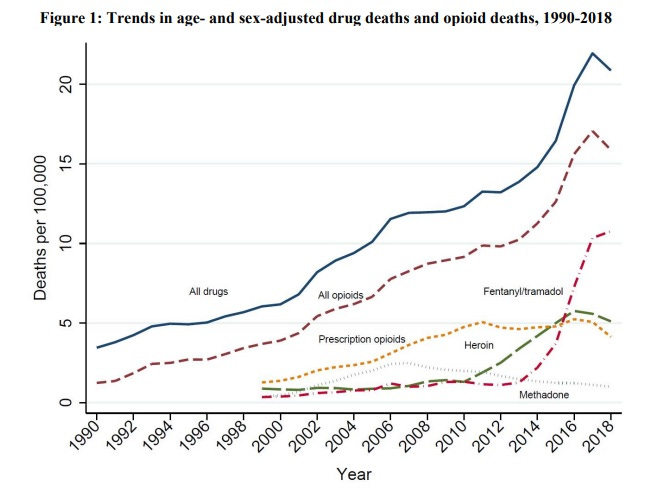

More than 100,000 Americans died of overdoses in the 12-month period that ended in April, according to provisional figures from the National Center for Health Statistics. That’s up almost 30 percent from the prior year. A big reason for those overdoses? “The rise in deaths — the vast majority caused by synthetic opioids — was fueled by widespread use of fentanyl, a fast-acting drug that is 100 times as powerful as morphine. Increasingly fentanyl is added surreptitiously to other illegally manufactured drugs to enhance their potency,” reports The New York Times.

As the NYT piece goes on to note, the initial addiction frequently begins with legal prescriptions from medical providers. Likewise, when you dig down to the initial spark of the opioid epidemic, you find a story of legal innovation and entrepreneurship gone terribly wrong. Although I have mentioned this paper before, I think the devastating overdose news makes it worth taking another look at “When Innovation Goes Wrong: Technological Regress and the Opioid Epidemic” by Harvard University economists David M. Cutler and Edward L. Glaeser. (The chart below is from the paper. Also, if you’re interested in a super-deep dive, I had a great Q&A with Glaeser back in June.)

After giving a fascinating history of humanity’s relationship with the opium poppy, Cutler and Glaeser turn to the 21st-century opioid crisis, which brought a fourfold increase in opioid deaths between 2000 and 2017 but really began with the availability of OxyContin in 1996. Their research finds that commonly cited causes, such as societal changes in “pain, negative affect, despair, and economic insecurity,” aren’t the main driver of the addiction surge: those factors account for only one quarter of the increase in prescription pain reliever use. So even though much of the coverage of the opioid epidemic has focused on globalization-spurred economic hardship in “left behind” Rust Belt regions of America, the impact of that economic stress on opioid overdose deaths is “modest,” Cutler and Glaeser conclude.

The more important story is this (bold by me):

The dominant changes in opioid supply started with modest technological and marketing innovations in the legal sector, which was followed by a burst of entrepreneurship in the illegal sector. In the legal market, physicians who cared about treating the impaired were persuaded by a time release system and a highly effective marketing campaign that the new opioids were truly safer than the older ones, and they started prescribing. While the opioid crisis did not begin with supply shifts in the illegal market, technological and institutional changes within that market furthered the epidemic. The introduction of fentanyl and the rise of Asian fentanyl exports appears to be a narcotic variant of the China trade shock, where declining transport costs and East Asian industrial expertise flooded American markets and displaced the opium producers of Mexico.

And despite a sharp reduction in legal opioid prescriptions since 2011, the epidemic is ongoing, with the authors giving little reason for near-term optimism:

America’s battle with opioids is not over. A movement to aid a population suffering from chronic pain has become a national crisis, with pain, despair, and entrepreneurship mixed in an unholy brew. The government may assert its authority over legal opioids, but it seems unable to stem most of the current illegal market supply, which increasingly comes in small shipments from Asia or Mexico. Even a pandemic could not slow the deaths from opioid drugs.

Long Read

☀ H.G. Wells and the long decline of techno-optimism

The New York Times review of Claire Tomalin’s new biography, The Young H.G. Wells, has prompted a bit of a social media kerfuffle over this line: “With Jules Verne and the publisher Hugo Gernsback, [Wells] invented the genre of science fiction.” Not so fast, replied the Twitterverse, pointing out that Mary Shelley wrote Frankenstein in 1818, nearly a half century before Wells was born. And while I knew about Shelley, I was unaware of English writer Margaret Cavendish, the Duchess of Newcastle, whose 1666 work The Blazing World is cited by some as proto-science fiction.

In any event, Wells’ early and long-lasting influence on the genre is undeniable. He shaped it into a form that’s totally recognizable to fans today. Hollywood, for instance, returns again and again to the foursome of novellas that Wells wrote in the last decade of the 19th century — The Time Machine, The Island of Doctor Moreau, The Invisible Man, and finally The War of the Worlds — both remaking and reimagining them for a modern audience. Wells, the review points out, “created the classic templates for every story that has been written about alien invasion . . . and time travel (he was the first to imagine such travel made possible by a machine).”

But Wells wrote a lot more than those oft-filmed favorites. And it’s really his lesser-known fiction and non-fiction of the early 20th century that cemented his position as a futurist, one with a clear utopian bent, and as an important public intellectual. In 1901, the 34-year-old Wells wrote Anticipations of the Reaction of Mechanical and Scientific Progress upon Human Life and Thought. It was his first non-fiction bestseller, one that aimed to explain "the way things will probably go in this new century." Wells envisioned a world of megacities, individual transportation by automobile, mechanized war, and eventually a one-world government.

A year later, Wells followed with The Discovery of the Future. Unlike the forecast-heavy Anticipations, Discovery makes the case that thinking about the future and trying to predict its path is an intellectually reputable pursuit. The essay oozes contempt for those “who regard the future as a perpetual source of convulsive surprises, as an impenetrable, incurable, perpetual blankness.” Those two works, along with later novels such as Men Like Gods from 1923 (3,000 years from now, humanity has evolved into a race of spacefaring telepaths) and The Shape of Things to Come from 1933 (an enlightened dictatorship run by aviators tries to rescue a world devastated by economic depression and plague), really captured the Wellsian philosophy, which his friend George Orwell succinctly summarized this way: “Science can solve all the ills that humanity is heir to, but that man is at present too blind to see the possibility of his own powers.” Orwell, of course, later wrote a book that depicted the downside of scientific progress, especially the ability to create two-way interactive telescreens.

Yet by the end of his life, even Wells no longer seemed so Wellsian. Mind at the End of Its Tether, published in 1945 (Wells died a year later), was a meditation on “both the end of the world and the end of its author as paired events,” writes Sarah Cole in Inventing Tomorrow: H.G. Wells and the Twentieth Century, a literary analysis. It’s the last testament of the greatest futurist of his age, a man whose utopian vision was shattered by two world wars and a global depression. His last remaining hope rested on the power of Darwinian evolution to elevate a vanguard that would lead the rest of humanity forward:

The writer sees the world as a jaded world devoid of recuperative power. In the past he has liked to think that Man could pull out of his entanglements and start a new creative phase of human living. In the face of our universal inadequacy, that optimism has given place to a stoical cynicism. The old then behave for the most part meanly and disgustingly, and the young are spasmodic, foolish and all too easily misled. Man must go steeply up or down and the odds seem to be all in favour of his going down and out. If he goes up, then so great is the adaptation demanded of him that he must cease to be a man. Ordinary man is at the end of his tether. Only a small, highly adaptable minority of the species can possibly survive.

Wells was hardly the only pessimist at the end of the war. Many economists, for instance, thought the cessation of hostilities would spark the recommencement of the Great Depression. Paul Samuelson, the future Nobel laureate from the Massachusetts Institute of Technology, famously predicted “the greatest period of unemployment and industrial dislocation which any economy has ever faced.”

But instead of Great Depression 2.0, America experienced what is now called a “golden age” of prosperity. It was a boom time for tech progress, too, with the start of the Atomic and Space Ages. There was also another wave of optimistic futurism that Wells would have approved of. But this futurism was no longer only an artisanal effort at forecasting by sci-fi writers. Thinking hard about the future took a serious turn following World War II, given the global convulsions of that conflict, the new risk of nuclear war, and what seemed like never-ending, breakneck technological change. Futurism or futurology (academic and systematic thinking about the future) became respected input for policymakers. Indeed, many of the rosy forecasts of the time can be seen in the great piece of post-war futurism, The Jetsons.

Unfortunately, that golden age started fading fast by the late 1960s. Frequent readers of this newsletter know what came next. Silent Spring. A productivity downshift. Oil shocks. Inflation. Stagflation. Eco-pessimism. The Population Bomb. The Limits to Growth. The Long Stagnation. And while all this was happening, America’s futurists became a pessimistic lot, seeing a world of scarcity rather than abundance — and promoting anti-market central planning to enforce a world of lowered expectations. And as that happened, their influence faded. There were too many failed forecasts, too many gloomy forecasts, and a loss of confidence in government (as well as expertise more broadly). While fiction about the future remains popular, the future has withered as a subject of formal and scholarly study that is seriously considered by government — at least in the United States. As The Economist reported back in 2007:

The word “futurologist” has more or less disappeared from the business and academic world, and with it the implication that there might be some established discipline called “futurology”. Futurologists prefer to call themselves “futurists”, and they have stopped claiming to predict what “will” happen. They say that they “tell stories” about what might happen. There are plenty of them about, but they have stopped being famous. You have probably never heard of them unless you are in their world, or in the business of booking speakers for corporate dinners and retreats.

Today, the most influential thinkers about the future seem to be Silicon Valley entrepreneurs and Hollywood film and television creators, the former more upbeat, the latter more dour. I would love to see the rise of a new generation of forward-looking thinkers able to present a broad, techno-solutionist view of tomorrow, an image of a future we would want to inhabit. (Along those lines, it’s worth checking out my recent Q&A with Azeem Azhar, founder of Exponential View, where his podcast and newsletter deliver in-depth tech analysis, and author of the newly released The Exponential Age: How Accelerating Technology is Transforming Business, Politics, and Society.) A strong Wellsian optimistic streak would be required, to be sure. But also needed: humility about our ability to plan that future from the top down (Wells was a Fabian socialist, at least for a time, and an advocate of world government), and a focus instead on creating a fertile ecology for discovery, invention, innovation, and growth. Given the gloom about climate change, pandemics, and the decline of democracy, I think we could all use a bit more of this:

Everything seems pointing to the belief that we are entering upon a progress that will go on, with an ever-widening and ever more confident stride, forever. . . . All this world is heavy with the promise of greater things, and a day will come, one day in the unending succession of days, when beings, beings who are now latent in our thoughts and hidden in our loins, shall stand upon this earth as one stands upon a footstool, and shall laugh and reach out their hands amid the stars.

That final quote, along with Cabal’s final conversation with Passworthy in the movie version of “Things to Come,” has always been a favorite of mine. The conversation below, which ends that film, is as good a description of Wellsianism as any, and for me it has a kind of religious stature, much as I imagine a psalm does for those so inclined. I even had the final line engraved on some of my past smartphones, and I will likely have it inscribed on a ring sometime soon.

The ending of “Things to Come”, 1936:

Raymond Passworthy: Oh, God, is there ever to be any age of happiness? Is there never to be any rest?

Oswald Cabal: Rest enough for the individual man -- too much, and too soon -- and we call it death. But for Man, no rest and no ending. He must go on, conquest beyond conquest. First this little planet with its winds and ways, and then all the laws of mind and matter that restrain him. Then the planets about him and at last out across immensity to the stars. And when he has conquered all the deeps of space and all the mysteries of time, still he will be beginning.

Raymond Passworthy: But... we're such little creatures. Poor humanity's so fragile, so weak. Little... little animals.

Oswald Cabal: Little animals. If we're no more than animals, we must snatch each little scrap of happiness and live and suffer and pass, mattering no more than all the other animals do or have done. Is it this? Or that? [gesturing at the stars] All the universe? Or nothingness? Which shall it be, Passworthy? Which shall it be?